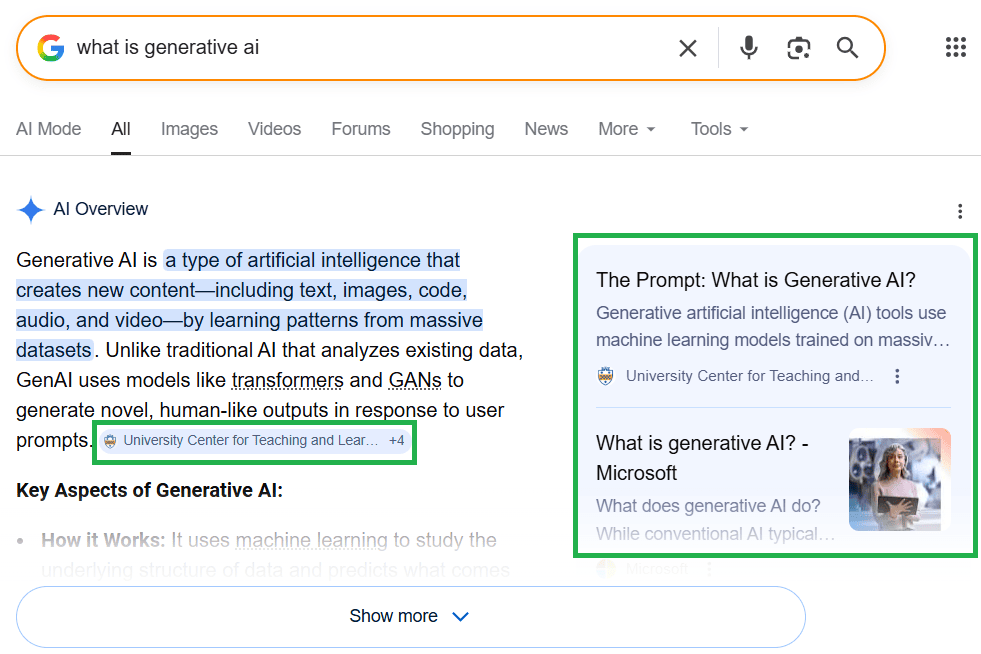

With the advent of generative AI, the way people search for information has changed dramatically. We still have traditional search results with blue links, but AI-generated answers are rapidly expanding in various forms and formats.

The web is currently drowning in articles about AI, but many of them don’t actually explain what specific changes to make to your website to show up in AI-generated answers.

In this article, we’ll cover the essential AI search engine optimization theory and provide practical recommendations on how to get your site cited by AI agents.

What is AI Search Engine Optimization?

AI Search Optimization is the process of making your website’s content easy for AI agents to understand and trust, so they choose to cite your site as a source in their generated answers.

You might also hear terms like Generative Engine Optimization (GEO) or Answer Engine Optimization (AEO). Regardless of the name, the goal remains the same: make your site a source for AI-generated responses.

What is an AI Agent?

An AI agent is an advanced system designed to accomplish complex tasks (queries) on behalf of a user. Unlike traditional search engines, which primarily find information and provide a list of links, AI agents synthesize information to answer complex queries directly. These agents are powered by Large Language Models (LLMs), which allow them to generate natural, human-like responses.

One of the first widely adopted AI assistants of this kind was ChatGPT, launched by OpenAI in November 2022. In March 2023, Google introduced its own competitor, Bard, which was later rebranded as Gemini. Today, Gemini powers AI Mode, a dedicated conversational search interface, and generates AI Overviews directly at the top of traditional search results.

Other popular agents include Perplexity and Microsoft Copilot, the latter of which integrates generative AI directly into the Bing ecosystem.

How AI Systems Work

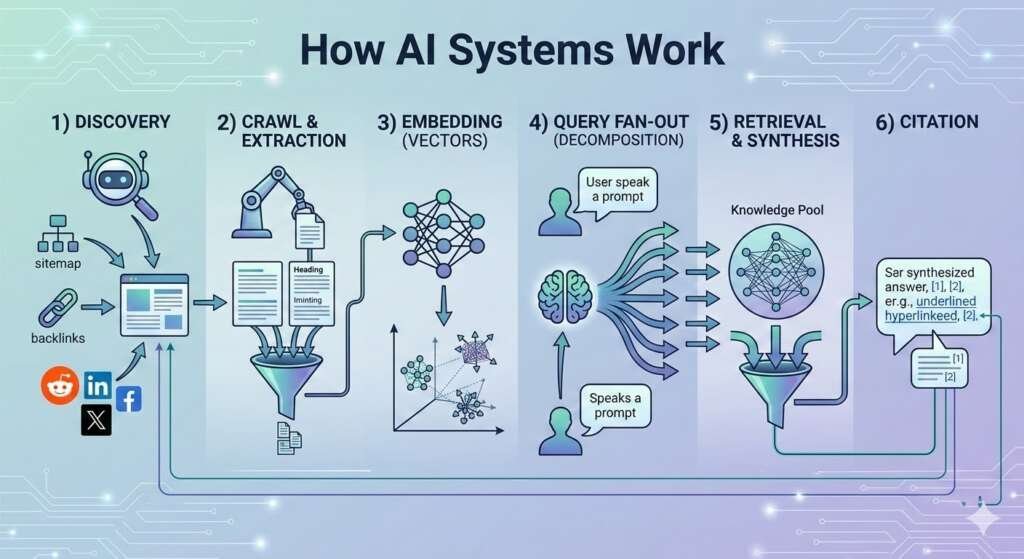

AI agents don’t rank websites in the traditional sense. Instead, they perform a process called Retrieval-Augmented Generation (RAG). This means that AI agents identify the best individual pieces of information from across the web to build a custom, synthesized answer.

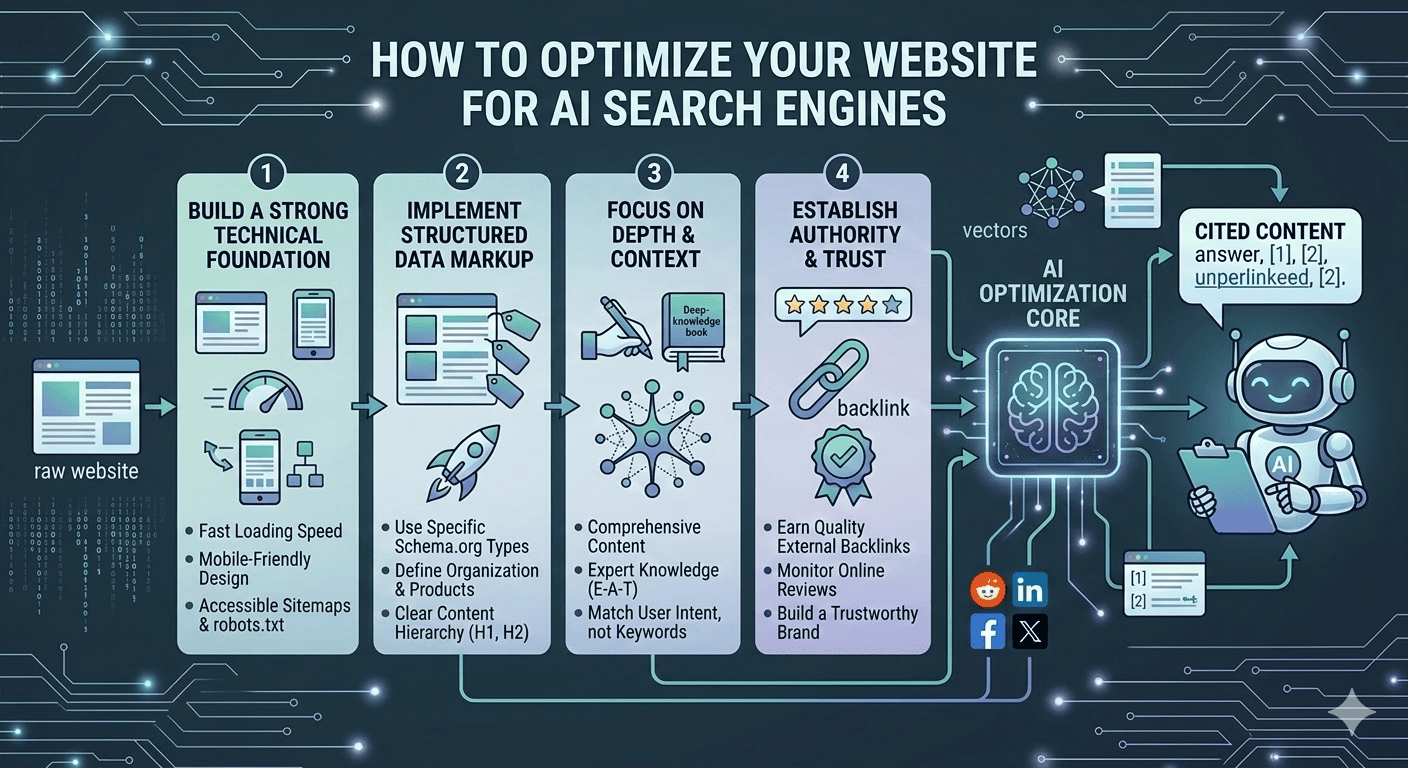

Here is the sequence of actions performed by AI agents:

- Discovery: The AI agent finds your content via sitemaps, backlinks, or social signals.

- Crawl & Extraction: The bot reads your page. It prioritizes text that is easy to parse, looking for clear “chunks” of information.

- Embedding: Your content is converted into vectors – mathematical representations of meaning. This allows the AI to understand that your content is conceptually related to a topic, even if the keywords don’t match perfectly.

- Query Fan-Out (Decomposition): When a user enters a prompt, the AI doesn’t just search for those specific words. It “fans out” the request into multiple related sub-queries to explore different angles, definitions, and sub-topics simultaneously.

- Retrieval & Synthesis: The AI pulls the most relevant “chunks” of data triggered by those fan-out queries and merges them into a single response.

- Citation: Finally, the AI adds citation links to the sources it used to build its answer.

Because of query fan-out, an AI might cite your site not because you rank #1 for the main keyword, but because your content had the best specific answer for one of its sub-queries.
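The fan-out and retrieval steps can be illustrated with a toy sketch. It is not how any production AI agent is implemented: simple bag-of-words vectors stand in for learned embeddings, and the chunks, sub-queries, site names, and function names are all hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (real systems use learned dense vectors)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Hypothetical chunks extracted from three different sites.
CHUNKS = {
    "site-a.example": "Server-side rendering puts the page text in the initial HTML response.",
    "site-b.example": "Schema markup helps machines identify entities on a page.",
    "site-c.example": "A clear heading hierarchy splits a page into extractable sections.",
}

# Hypothetical sub-queries that a single user prompt might be fanned out into.
SUB_QUERIES = [
    "what is server-side rendering",
    "how does schema markup identify entities",
]


def retrieve(sub_queries: list[str], chunks: dict[str, str]) -> dict[str, str]:
    """For each sub-query, return the source whose chunk is most similar to it."""
    vectors = {source: embed(text) for source, text in chunks.items()}
    return {
        query: max(vectors, key=lambda source: cosine(embed(query), vectors[source]))
        for query in sub_queries
    }


cited = retrieve(SUB_QUERIES, CHUNKS)
for query, source in cited.items():
    print(f"{query!r} -> cite {source}")
```

Each sub-query is matched to a different site here, which mirrors the point above: a page can earn a citation for one narrow sub-question without being the best match for the overall prompt.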

Pre-trained Knowledge vs. Live Search

To answer a user’s query, AI systems either draw on pre-trained knowledge or perform a live search (web browsing), depending on the intent of the query.

AI systems use pre-trained data to answer general questions, provide creative insights, or explain historical concepts established during the model’s initial training phase. When a user asks about real-time data, such as breaking news, stock prices, or niche updates that occurred after the model’s knowledge cutoff (the date when an AI model’s training data ends), AI agents trigger a live search.

These approaches can be combined to provide the best, most up-to-date answer to a given prompt.

AI Hallucinations

Large language models are probabilistic: they generate text by predicting the most likely next word. For example, if a user types “what is the highest…”, the model predicts that the following words might be “mountain” or “paying job”. This occasionally leads to “hallucinations”: instances where the AI confidently presents entirely false information as fact.

Hallucinations typically happen when a model lacks specific data or prioritizes completing the answer over factual accuracy. This is why all facts in AI responses, especially specific statistics and niche details, must be verified for accuracy.

Comparison Table: Traditional SEO vs. AI SEO

Let’s explore how SEO has evolved after the integration of AI into core search algorithms.

To put it simply, AI Search Engine Optimization is a new, sophisticated “layer” on top of the existing SEO foundation. This means that SEO fundamentals haven’t gone away and remain important. If an AI agent can’t discover, crawl, or read your content, it will never be cited in AI-generated responses.

However, AI agents work differently from the traditional Googlebot. The table below summarizes what stays the same and what changes in AI SEO compared to traditional SEO.

Traditional SEO vs. AI Search Engine Optimization

| Factor | Traditional SEO Importance | AI SEO Importance |
| --- | --- | --- |
| Crawlability & Indexing (URL structure, robots.txt, redirects, “noindex” tags, etc.) | Essential: If a page cannot be indexed, it won’t appear in search results. | Essential: If an AI agent can’t discover a page, it can’t read and understand it. |
| Site Health (Duplicate content, broken links, canonical link issues, mixed HTTP/HTTPS content, etc.) | Medium/High: Depends on site size. For larger sites, technical health is essential to optimize the crawl budget and ensure a complete crawl. For smaller sites, issues like broken links or duplicate content primarily affect the user experience. | Medium: AI agents can usually read your content even if a page has technical errors. However, duplicate content makes it difficult for AI to identify which page is the original data source. |
| JavaScript Dependency | Low Risk: Googlebot is highly proficient at rendering JavaScript. | High Risk: ChatGPT and Perplexity primarily crawl raw HTML. Content that requires JavaScript to load may be completely invisible to them. Microsoft Copilot is generally good at JavaScript rendering. Google Gemini can render JavaScript well because it shares the same infrastructure as Googlebot. |
| Site Speed and Core Web Vitals | Low/Medium: A confirmed ranking factor, but rarely the primary one; it acts more like a tie-breaker. | Medium: AI agents often fetch pages in real-time. If your site takes too long to respond, the agent may terminate the connection and cite another source to avoid latency. |
| Schema Markup | Medium/High: Not a direct ranking factor, but necessary to get rich results (snippets) and increase click-through rates. | Essential: AI agents use Schema to identify entities, resolve ambiguity, and extract specific details from your content with high confidence. |
| Titles & Meta Descriptions | Medium: Primarily used to describe the page content in search results and improve click-through rates (CTR). | Medium: AI agents use page titles for source cards and citations. Meta descriptions are less critical, as AI can generate its own page summaries. |
| Heading Hierarchy (H1-H6) | Medium: Improves readability and helps crawlers understand page structure, but headings are not an important ranking factor. | Critical: AI agents use headings to segment content into extractable chunks. Poor heading hierarchy prevents accurate extraction and significantly reduces the chance of being cited. |
| Internal Link Architecture | High: Essential for distributing “link equity” (PageRank) and ensuring all pages are discoverable by crawlers. | High: Establishes the semantic relationship between your pages, helping AI agents understand your authority on a given topic. |
| Anchor Text Optimization | High: An important signal used to help a page rank for specific, targeted keywords. | High: Provides semantic context for the destination page, helping AI agents understand exactly what information they will find there. |
| Image Alt Attributes | High: Important for accessibility (screen readers) and ranking in Google Image Search. | Medium: AI can recognize images very well, but alt text provides the human-verified context that prevents recognition errors. |
| Site Credibility & Content Accuracy (E-E-A-T Signals) | High: E-E-A-T is not a ranking factor in the traditional meaning, but it provides a framework for Google’s algorithms that seek helpful and reliable content. | Essential: AI agents require verified facts and original research to provide reliable citations and minimize hallucinations. |
| Content “Chunking” | Low: Google can use “passage indexing” to identify specific paragraphs as the best answer, but it still primarily ranks the page as a single holistic unit. | High: AI agents extract individual “chunks” or paragraphs, often from different sites, to build synthesized answers. In order for content to be citable, it must be structured into sections. |
| Backlink Profile | Essential: High-quality backlinks are one of the most important ranking factors for traditional search engines. | Essential: Backlinks are also important for AI systems. If your content lacks high-quality backlinks, AI agents may lack the necessary evidence to trust and cite your information. |
| Off-Site Mentions & Social Signals (Reddit, Forums, etc.) | Medium: Mentions and social signals are not direct ranking factors; they primarily drive brand awareness and can increase branded organic traffic. | Important: AI agents track brand mentions across the web (even without links) to verify your real-world reputation and brand authority. |

To recap, traditional SEO optimizes a website for ranking signals so that it becomes more visible in search results. AI Search Engine Optimization still depends on technical SEO, but it also requires content that is structured and verifiable for AI agents.

How to Track AI Visibility

AI visibility can’t be measured like traditional search rankings. Traditional rank tracking – monitoring your site’s position for a specific keyword – no longer works in AI-powered search. According to research, AI recommendation lists repeat their exact order less than 1% of the time. If you ask an AI agent the same question twice, you will likely get two different lists.

This happens because AI models are probabilistic, not static. They vary their outputs based on:

- Stochasticity: AI systems are designed to vary their word choice to sound natural. They choose the next word based on probability, which means they rarely generate the exact same sentence or list twice.

- Contextual Nuance: Even a tiny change in how a user phrases a prompt can lead the AI to retrieve different “chunks” of information.
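The effect of stochastic output on list order can be sketched with weighted random sampling. This is only an analogy for how probabilistic generation behaves, not a model of any real AI system; the brand names and weights are hypothetical.

```python
import random

# Hypothetical relevance weights a system might assign to candidate brands.
CANDIDATES = {"Brand A": 0.35, "Brand B": 0.30, "Brand C": 0.20, "Brand D": 0.15}


def sample_list(weights: dict[str, float], k: int, rng: random.Random) -> list[str]:
    """Draw k distinct items, one at a time, with probability proportional to weight."""
    remaining = dict(weights)
    picked = []
    for _ in range(k):
        names = list(remaining)
        choice = rng.choices(names, weights=[remaining[n] for n in names])[0]
        picked.append(choice)
        del remaining[choice]
    return picked


rng = random.Random(0)  # seeded only so the demo is reproducible
runs = [tuple(sample_list(CANDIDATES, 3, rng)) for _ in range(1000)]
distinct_orderings = len(set(runs))
print(f"{distinct_orderings} distinct top-3 lists across {len(runs)} runs")
```

Even with fixed weights, repeated runs produce many different lists, which is why a single observed position says little about overall visibility.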

Therefore, to track AI visibility, we need to use metrics that measure how often your site appears across various AI-powered responses.

Key Performance Indicators in AI SEO

These metrics measure how effectively AI agents recognize and utilize your content:

1. Citations

This metric measures how often an AI agent provides a direct link to your website. Citations often appear as inline numbers, footnotes, or linked text within AI-generated responses.

Citations are important because they show users which sources an answer draws on and let them verify its accuracy.

2. Mentions

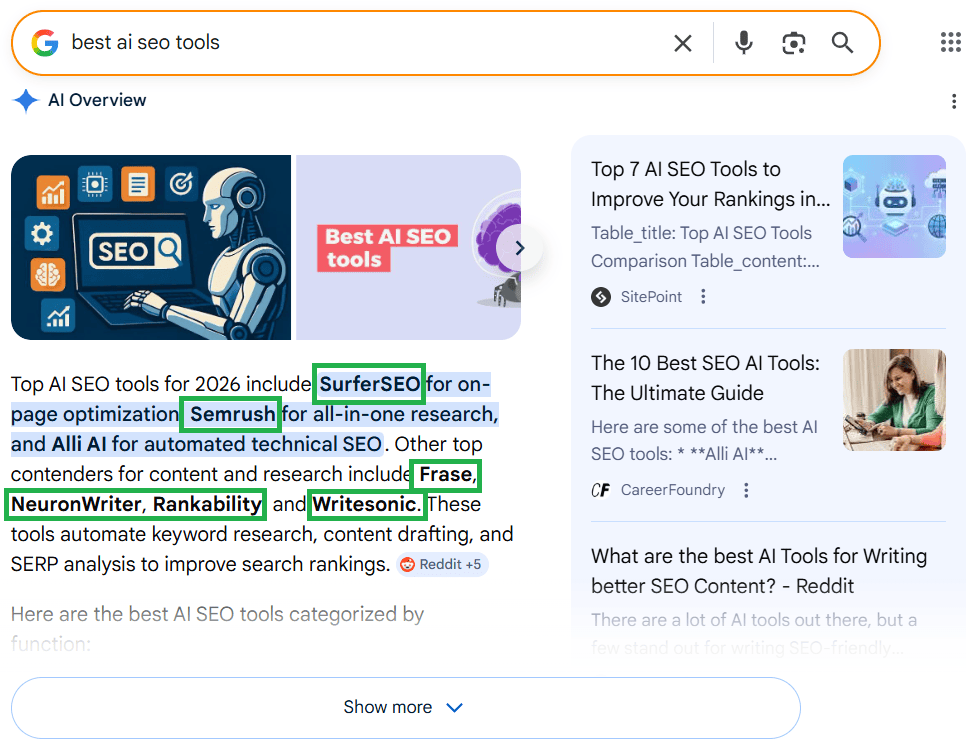

This metric measures how often an AI agent mentions your product, service, or brand, even without a link. Mentions can appear in lists, comparisons, or recommendations.

Mentions signal to users that your brand is a recognized authority within its niche.

3. Share of Voice (SOV)

SOV measures your brand’s presence across an entire topic. This is the percentage of generative responses in your industry that include your brand versus your competitors.

For example, if users ask an AI 100 different questions related to “best AI SEO software,” your share of voice shows what percentage of those answers include your brand.
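As a minimal sketch of the arithmetic, assuming you have already collected a sample of AI responses and recorded which brands each one mentions (the sample data and the function name below are hypothetical):

```python
# Hypothetical sample: the brands named in each of five AI responses to related prompts.
RESPONSES = [
    {"Brand A", "Brand B"},
    {"Brand B"},
    {"Brand A", "Brand C"},
    {"Brand B", "Brand C"},
    {"Brand A"},
]


def share_of_voice(responses: list[set[str]], brand: str) -> float:
    """Percentage of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    hits = sum(1 for mentioned in responses if brand in mentioned)
    return 100.0 * hits / len(responses)


for brand in ("Brand A", "Brand B", "Brand C"):
    print(f"{brand}: {share_of_voice(RESPONSES, brand):.0f}%")
```

Because one response can mention several brands, the percentages for all brands do not need to add up to 100.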

4. Sentiment

Sentiment tracks the tone in which your product, service, or brand is discussed: positive, negative, or neutral. This metric is important because AI models use sentiment to decide which entities to recommend. Consistent negative sentiment can cause an AI agent to stop suggesting your brand (product, service) to users entirely.

AI Search Engine Optimization Best Practices

The following recommendations will help maximize your site’s visibility in AI-generated answers across all AI-powered search experiences.

Ensure AI Agents Can Access Your Content

AI agents must first be able to discover and crawl your site before citing it in their answers. They generally respect ‘noindex’ tags and robots.txt directives for real-time retrieval. If a page is blocked from being crawled or has a ‘noindex’ tag, AI will skip it when looking for citations.

However, it is important to note that these directives do not remove content from an AI’s existing training data. If an AI model used your content for training before you added the ‘noindex’ tag or blocking rule to robots.txt, it can still “remember” and use that information in a response, although it is less likely to provide a citation link.

To maximize visibility, ensure your pages are indexable and that you haven’t blocked specific AI crawlers in your robots.txt file.
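One way to check this is with Python's standard `urllib.robotparser` module. The sketch below is illustrative: the robots.txt content and URL are hypothetical, and the user-agent tokens are examples that AI vendors have published, so verify the current names in each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content (hypothetical). In practice you would load your own file.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

# Example AI crawler tokens (assumption: check each vendor's docs for the current list).
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "PerplexityBot", "ClaudeBot"]


def blocked_crawlers(robots_txt: str, url: str, agents: list[str]) -> list[str]:
    """Return the agents that the given robots.txt rules forbid from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


blocked = blocked_crawlers(ROBOTS_TXT, "https://www.example.com/blog/post", AI_CRAWLERS)
print("Blocked AI crawlers:", blocked)
```

In this example only the crawler with its own `Disallow: /` group is reported as blocked; the others fall back to the wildcard group, which permits the blog URL.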

Minimize JavaScript-Dependent Content

Some AI agents like Gemini can execute JavaScript, but many popular ones, such as ChatGPT and Claude, primarily read the raw HTML response. If your core content only appears after several scripts load, these AI agents will likely miss it.

Therefore, prioritize Server-Side Rendering (SSR) to ensure your important text is present in the initial HTML response from your server. This allows AI crawlers to read your content immediately, without executing JavaScript.
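A quick way to approximate what a non-rendering crawler sees is to look for your key text in the raw HTML, ignoring anything inside scripts. The following is a rough sketch using Python's built-in `html.parser`; the two sample pages and the helper names are hypothetical, and real crawlers may extract text differently.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text from raw HTML, skipping <script> and <style> contents."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.parts.append(data.strip())


def text_in_raw_html(raw_html: str, phrase: str) -> bool:
    """True if phrase appears in the text a non-rendering crawler would see."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    return phrase.lower() in " ".join(parser.parts).lower()


# Hypothetical responses: one server-rendered, one that fills the page with JavaScript.
SSR_PAGE = "<html><body><h1>Pricing</h1><p>Plans start at $10 per month.</p></body></html>"
CSR_PAGE = (
    "<html><body><div id='root'></div>"
    "<script>document.getElementById('root').textContent = 'Plans start at $10 per month.';</script>"
    "</body></html>"
)

print(text_in_raw_html(SSR_PAGE, "Plans start at $10"))
print(text_in_raw_html(CSR_PAGE, "Plans start at $10"))
```

The server-rendered page exposes the pricing sentence directly, while the client-rendered page only contains it inside a script, so the check fails for that page.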

Implement Relevant Schema Markup Across the Entire Site

Use the most specific Schema type possible. For example, use Dentist instead of LocalBusiness for a dental clinic website. Implement Product, FAQPage, Person, and Review markup wherever applicable.

Schema markup must exactly match the visible content on the page. Otherwise, AI agents may skip the markup entirely or view the information as untrustworthy.
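As an illustration, the sketch below builds a JSON-LD snippet for the dental clinic example with Python's `json` module. The business details are placeholders, and the selection of properties is an assumption; consult schema.org for the properties that apply to your type.

```python
import json

# Hypothetical business details; every value must match what the page visibly shows.
dentist = {
    "@context": "https://schema.org",
    "@type": "Dentist",
    "name": "Example Dental Clinic",
    "url": "https://www.example.com/",
    "telephone": "+1-555-0100",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "1 Example Street",
        "addressLocality": "Springfield",
        "addressCountry": "US",
    },
}

# Serialize the data and wrap it in the script tag that carries JSON-LD in HTML.
json_ld = json.dumps(dentist, indent=2)
snippet = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(snippet)
```

Generating the markup from the same data source that renders the page is one way to keep the two from drifting apart.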

Write Structured Paragraphs

AI agents often do not process a whole page when looking for an answer. Instead, they retrieve specific “chunks” of text. Try to write so that each individual paragraph can stand alone as a complete answer to a potential question (prompt).

Keep your paragraphs to 40-60 words and start with the most important fact. If an AI agent needs just one paragraph from your guide, make it easy for it to extract that paragraph without needing to scan the rest of the page for context.
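A simple script can flag paragraphs that fall outside this range. The sketch below assumes paragraphs are separated by blank lines and counts whitespace-separated words; the function name and sample draft are hypothetical.

```python
def paragraph_report(text: str, low: int = 40, high: int = 60) -> list[tuple[int, int, bool]]:
    """Return (paragraph number, word count, within range?) for each blank-line-separated paragraph."""
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    return [
        (number, len(p.split()), low <= len(p.split()) <= high)
        for number, p in enumerate(paragraphs, start=1)
    ]


# Hypothetical draft: one short paragraph and one built to be 45 words long.
DRAFT = "Schema markup helps AI agents.\n\n" + " ".join(["word"] * 45)

for number, words, ok in paragraph_report(DRAFT):
    print(f"Paragraph {number}: {words} words, {'ok' if ok else 'outside 40-60'}")
```

Treat the result as a prompt for review rather than a hard rule: a short paragraph may be fine if it fully answers a question on its own.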

Fix Your Heading Hierarchy

In traditional SEO, you could sometimes get away with a messy heading structure if the content was great. For AI agents, headings are much more important – they use them to chunk your content into logical segments. If your H2s and H3s do not follow a logical order, the AI may fail to understand where one thought ends and another begins.

Therefore, use only one H1 per page for the main topic. Use H2s for the main sub-points and H3s for details within those points. Never skip a level (e.g., jumping from H1 to H3), as this makes your data significantly harder for the AI to process.
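These rules are easy to check automatically. Here is a minimal sketch using Python's built-in `html.parser`; the class name, function name, and sample markup are hypothetical, and the checks cover only the two rules described above.

```python
import re
from html.parser import HTMLParser


class HeadingLevels(HTMLParser):
    """Records the level (1-6) of every heading tag in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        if re.fullmatch(r"h[1-6]", tag):
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    """Flag a missing or repeated H1 and any jump that skips a heading level."""
    parser = HeadingLevels()
    parser.feed(html)
    parser.close()
    issues = []
    h1_count = parser.levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one h1, found {h1_count}")
    previous = 0
    for level in parser.levels:
        if level > previous + 1:  # going deeper by more than one level
            origin = f"h{previous}" if previous else "page start"
            issues.append(f"skipped level: {origin} -> h{level}")
        previous = level
    return issues


GOOD = "<h1>Guide</h1><h2>Setup</h2><h3>Install</h3><h2>Usage</h2>"
BAD = "<h1>Guide</h1><h3>Install</h3>"

print(heading_issues(GOOD))
print(heading_issues(BAD))
```

Moving back up the hierarchy (for example, from an H3 to the next H2) is not flagged, since only downward jumps skip a level.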

Prioritize Facts, Statistics, and E-E-A-T Signals

AI models look for verifiable data. If you mention a fact or statistic, cite the specific number and link to the original research.

Including verified data sends strong E-E-A-T signals to AI models. Expert-written content backed by verified data significantly increases your chances of appearing in generative responses.

Use Images & Video in Your Content

Modern AI agents are multimodal, meaning they can simultaneously process text, recognize images, and understand video content. This allows users to perform complex searches, such as uploading an image and asking specific questions about it. To succeed here, you must support your text with high-quality visual assets.

Include original, high-resolution images with descriptive alt text that explains the context of the image, not just the objects within it.
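A small audit script can list images whose alt text is missing or empty. This sketch uses Python's built-in `html.parser`; the names and sample markup are hypothetical. Note that an empty alt attribute is legitimate for purely decorative images, so the output is a list to review, not a list of errors.

```python
from html.parser import HTMLParser


class MissingAlt(HTMLParser):
    """Collects the src of every <img> whose alt attribute is absent or empty."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attributes = dict(attrs)
            if not (attributes.get("alt") or "").strip():
                self.missing.append(attributes.get("src") or "(no src)")


def images_missing_alt(html: str) -> list[str]:
    """Return the sources of images that have no usable alt text."""
    parser = MissingAlt()
    parser.feed(html)
    parser.close()
    return parser.missing


# Hypothetical page fragment with one described image and two undescribed ones.
PAGE = (
    '<img src="chart.png" alt="Bar chart comparing citation share across three AI agents">'
    '<img src="hero.jpg">'
    '<img src="logo.png" alt="">'
)

print(images_missing_alt(PAGE))
```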

Use ImageObject and VideoObject Schema to provide AI agents with explicit metadata (such as thumbnails). For video, always provide a transcript to ensure the AI can index the spoken content.

If possible, add unique visuals, such as comparison charts, that the AI cannot find elsewhere. This makes your page a high-priority source for citations.

Build Off-Site Authority

If someone searches for information related to your brand (service, product), AI agents don’t just look at your site – they cross-reference your claims with the rest of the web.

According to Semrush research, AI agents place particular importance on what people say on Wikipedia, Reddit, Quora, and industry-specific sites. So if your brand, product, or service is frequently mentioned on these platforms, it has a significantly higher chance of being mentioned in AI-generated responses.

Encourage reviews, participate in industry forums, and ensure your brand presence is consistent across the web.

Conclusion

The way people search for information has evolved from typing keywords and clicking blue links to writing complex prompts and receiving synthesized AI-generated answers. With this shift, the goal of SEO has moved from ranking for keywords to being cited and mentioned by AI agents.

Success in AI Search Engine Optimization still relies on traditional SEO practices, but requires additional optimization efforts specifically for LLMs. Start optimizing for AI search today to ensure your brand remains visible across the AI-powered search experiences of tomorrow.

For more insights on how to use AI tools for SEO tasks, read the guide on generative AI for SEO.